Nobuyuki Oishi

Engineer, researcher, and maker of things that work.

I believe technology can and should make people more aware, more active, more alive. This conviction is what drives my research in human sensing × AI.

Hi there, I'm Nobu!

I am originally from Shizuoka, Japan. I received my B.Eng and M.Eng from UEC Tokyo, and recently completed my PhD at the University of Sussex under the supervision of Prof. Daniel Roggen.

I am a hands-on researcher who loves translating ideas into reality. I enjoy the process of developing systems end-to-end—from getting my hands dirty with raw data collection and pre-processing, to training machine learning models and deploying them in the real world. I find joy in watching it all finally click into place.

Lately, I have been diving deep into how we can use LLMs and AI agents to accelerate research and development. If you are working on something similar, or just want to chat about the intersection of AI, wearables, and wellness, I'd love to connect!

Traveling around Japan by bicycle. Cape Sōya, the northernmost point in Japan.

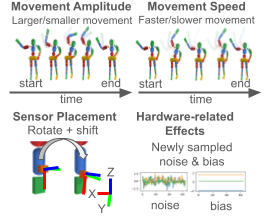

Collecting and annotating IMU data for human activity recognition is costly and hard to scale. We address this through Physically Plausible Data Augmentation (PPDA), a physics-informed augmentation approach enabled by WIMUSim, a differentiable simulation framework that minimises the gap between virtual and real IMU signals via gradient-based optimisation. Evaluated across supervised and self-supervised settings, PPDA consistently outperforms conventional augmentation, enabling more efficient and ethically responsible use of human-subject data.

"Can earables detect Freezing of Gait (FoG)?" Earables are ideally positioned to sense FoG and deliver immediate audio feedback — making them a promising assistive tool for Parkinson's disease patients. To explore this without burdening vulnerable patients with new data collection, we simulated earable IMU signals from existing VR motion capture data — the first simulation-based proof-of-concept for FoG detection with earables.

How robust are HAR models to real-world variability: different sensor placements, mixed hardware, different users? We collected a multimodal dataset capturing controlled walking and uncontrolled salad preparation from participants in their 20s to 70s, to benchmark the robustness of deep learning models against such variability. Using this dataset, we co-organised the "From Labels to Text" Open-ended Idea Challenge at ABC 2026.

I'm always happy to talk — about research, collaboration, or whatever's on your mind.